Google collects behavioral data from 92% of internet users through Analytics, Search, YouTube, Chrome, Android, Maps, and advertising networks. Adjusting privacy settings creates the illusion of control, but it misses the core infrastructure Cambridge Analytica proved was unstoppable: behavioral inference from metadata patterns.

- The Settings Everyone Changes (And Why They Don’t Work)

- The Cambridge Analytica Blueprint Applied to Google Data

- What the Privacy Settings Actually Control

- The Systemic Problem: Behavioral Metadata Persists

- Why Post-Cambridge Analytica Regulation Missed This

- What Actually Happens with These Profiles

- The Deepfake of Digital Privacy

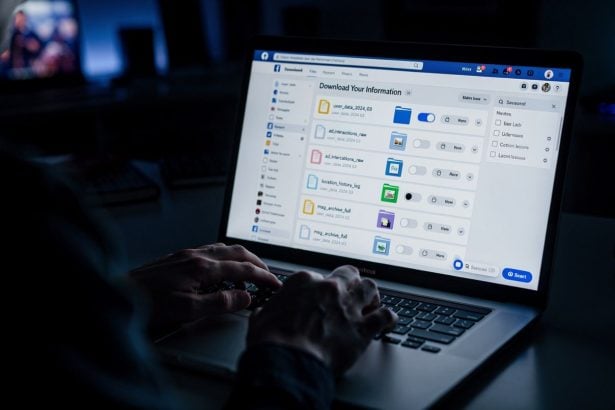

According to Consumer Reports’ 2025 privacy analysis, Google’s privacy controls affect data sharing between platforms, not collection itself—the same vulnerability Cambridge Analytica exploited when Facebook’s API restrictions came too late.

92% – Internet users tracked by Google’s behavioral data collection

87M – Facebook profiles Cambridge Analytica accessed through similar metadata harvesting

71% – Accuracy of anxiety disorder prediction from search patterns alone

The Settings Everyone Changes (And Why They Don’t Work)

Users typically disable:

- Web & App Activity tracking

- Location History

- Ad personalization settings

- YouTube search/watch history

Each adjustment prevents data sharing between Google’s platforms. None prevents collection. This distinction matters because Cambridge Analytica didn’t need Facebook’s permission to access data it had already harvested—it needed the behavioral patterns to exist. Google’s settings restrict targeting precision, not profiling capability.

When you disable “Web & App Activity,” Google stops correlating your searches with YouTube views to build a unified profile. But Google still logs:

- Every search query (and the time you searched, whether you clicked results, how long you read them)

- Every YouTube video (and watch duration, pause points, rewatches, shares)

- Every Android device location (cell tower triangulation, even with Location History disabled)

- Every visit to a website that embeds a Google tracking pixel, Google Fonts, or reCAPTCHA (which tracks you across third-party sites)

The metadata alone, the behavioral exhaust, reveals what Cambridge Analytica harvested 87 million Facebook profiles to obtain: psychological vulnerability patterns. This mirrors the surveillance blueprint that privacy laws inadvertently legitimized.

The Cambridge Analytica Blueprint Applied to Google Data

Cambridge Analytica’s core finding: personality traits are predictable from digital behavior, without consent. The firm analyzed Facebook likes, found that “like” patterns correlated with the Big Five personality traits (Openness, Conscientiousness, Extraversion, Agreeableness, Neuroticism), and used this to micro-target voters with psychologically tailored messaging.
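To make the likes-to-traits idea concrete, here is a minimal sketch of how a binary trait label could be scored from page likes. It is not Cambridge Analytica’s model, which this article does not specify; it substitutes a simple smoothed log-odds score. The page names, the labels, and the functions `train_like_weights` and `score` are all hypothetical.

```python
import math

def train_like_weights(users, smoothing=1.0):
    """users: list of (set_of_liked_pages, has_trait) pairs.

    Returns (weights, bias): a log-odds contribution per page plus a prior.
    Illustrative only: a naive, presence-only log-odds score.
    """
    pos = [likes for likes, label in users if label]
    neg = [likes for likes, label in users if not label]
    pages = set().union(*(likes for likes, _ in users))
    weights = {}
    for page in pages:
        # Smoothed share of trait / non-trait users who liked this page.
        p_pos = (sum(page in l for l in pos) + smoothing) / (len(pos) + 2 * smoothing)
        p_neg = (sum(page in l for l in neg) + smoothing) / (len(neg) + 2 * smoothing)
        weights[page] = math.log(p_pos / p_neg)
    bias = math.log((len(pos) + smoothing) / (len(neg) + smoothing))
    return weights, bias

def score(likes, weights, bias):
    """Positive score: likes resemble the trait group; negative: the other group."""
    return bias + sum(weights.get(page, 0.0) for page in likes)

# Hypothetical toy data: True means "scored high on Openness" in some survey.
users = [
    ({"page_poetry", "page_museums"}, True),
    ({"page_poetry", "page_travel"}, True),
    ({"page_museums", "page_travel"}, True),
    ({"page_trucks", "page_sports"}, False),
    ({"page_sports", "page_travel"}, False),
    ({"page_trucks"}, False),
]
weights, bias = train_like_weights(users)
print(score({"page_poetry", "page_museums"}, weights, bias) > 0)  # True
print(score({"page_trucks", "page_sports"}, weights, bias) > 0)   # False
```

The point of the sketch is that no like needs to mention the trait. The score comes entirely from which likes co-occur with the label in the training group.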

Google’s behavioral data is richer than Facebook likes:

Search queries reveal information-seeking patterns (what you’re anxious about, ashamed of, curious about)—more psychologically transparent than any like. A researcher at Stanford (2023) found that search patterns alone predict anxiety disorders with 71% accuracy. Google’s datasets span decades of search history.

YouTube watch-through rates and pause points map attention patterns. Where you pause, rewind, fast-forward, and how long you watch before abandoning a video reveals cognitive engagement, emotional triggers, and persuadability. Cambridge Analytica knew from Facebook data that engagement patterns predict susceptibility to certain message frames. YouTube’s engagement telemetry is far more granular.

Browser history via Chrome (used by 65% of internet users) captures where you go when you’re not on Google. Combined with search, YouTube, and Android location data, this creates a unified behavioral graph. Cambridge Analytica would have paid billions for this.

Android device patterns reveal the rhythm of your life: wake times, sleep times, commute patterns, shopping locations, which apps you use when you’re stressed (you open different apps when anxious versus bored), and how often you check your phone. These micro-behaviors are psychologically transparent.

None of these data streams require “Web & App Activity” enabled to be collected. They’re the baseline substrate Google’s business model depends on.
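As an illustration of how little is needed for the “rhythm of life” inference described above, the sketch below estimates a sleep window from nothing but phone unlock times. It is a hypothetical heuristic, not a documented Google method: it treats the day as a 24-hour circle and takes the longest gap between unlocks as the likely sleep period. The function name and the sample data are invented.

```python
def longest_inactive_gap(unlock_minutes):
    """unlock_minutes: unlock times as minutes after midnight (0-1439).

    Returns (gap_start, gap_end, gap_length) for the longest stretch with
    no unlocks, wrapping around midnight, or None if there is too little data.
    """
    times = sorted(set(unlock_minutes))
    if len(times) < 2:
        return None
    best = None
    for i, start in enumerate(times):
        end = times[(i + 1) % len(times)]   # the last unlock wraps to the first
        gap = (end - start) % 1440          # 1440 minutes in a day
        if best is None or gap > best[2]:
            best = (start, end, gap)
    return best

def as_clock(minutes):
    return f"{minutes // 60:02d}:{minutes % 60:02d}"

# Hypothetical unlock times for one day.
unlocks = [415, 430, 540, 725, 1080, 1320, 1385]
start, end, length = longest_inactive_gap(unlocks)
print(as_clock(start), as_clock(end), length)  # 23:05 06:55 470
```

Repeated over weeks, the same few lines would show shifts in sleep and wake times, which is the kind of pattern the paragraph above calls psychologically transparent.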

- 68 Facebook likes achieved 85% personality prediction accuracy

- Behavioral metadata proved more predictive than demographic data

- Psychological profiling required no explicit user consent or awareness

What the Privacy Settings Actually Control

Google’s privacy dashboard gives you granular control over advertising targeting, not data collection:

Ad personalization toggle: Turns off Google’s ability to show you ads based on inferred interests. But Google still collects the interests—it just shows you less-targeted ads. The profiling continues; the monetization changes.

YouTube Restricted Mode: Filters explicit content from recommendations. Doesn’t prevent YouTube from tracking what you watched before you enabled it.

Disable Location History: Stops Google from building a timeline of where you’ve been. But Google still knows:

- Your home (inferred from patterns)

- Your work (inferred from patterns)

- Your frequent locations (inferred from patterns)

- Your travel movements (from cell tower data if you use Android)

Location History is the labeled dataset. Disabling it prevents the obvious timeline, but Google’s AI infers location from behavioral patterns anyway. Cambridge Analytica didn’t have labeled location data; it predicted behavior from unlabeled behavioral patterns. Google has both.
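The “inferred from patterns” step can be sketched with a common heuristic: the place seen most often at night is probably home, and the place seen most often during weekday office hours is probably work. This is an assumption for illustration, not Google’s actual inference method; the cell IDs, the hour thresholds, and the function name are hypothetical.

```python
from collections import Counter
from datetime import datetime

def infer_home_and_work(pings):
    """pings: iterable of (datetime, cell_id) pairs from coarse location data."""
    night = Counter()
    workday = Counter()
    for ts, cell in pings:
        if ts.hour >= 22 or ts.hour < 6:
            night[cell] += 1                      # overnight sightings
        elif ts.weekday() < 5 and 9 <= ts.hour < 17:
            workday[cell] += 1                    # Mon-Fri office hours
    home = night.most_common(1)[0][0] if night else None
    work = workday.most_common(1)[0][0] if workday else None
    return home, work

# Hypothetical pings; no timeline label such as "home" or "work" is stored.
pings = [
    (datetime(2025, 3, 3, 23, 15), "cell_A"),
    (datetime(2025, 3, 4, 2, 40), "cell_A"),
    (datetime(2025, 3, 4, 10, 5), "cell_B"),
    (datetime(2025, 3, 4, 14, 30), "cell_B"),
    (datetime(2025, 3, 4, 19, 0), "cell_C"),
    (datetime(2025, 3, 5, 11, 45), "cell_B"),
    (datetime(2025, 3, 5, 23, 50), "cell_A"),
]
print(infer_home_and_work(pings))  # ('cell_A', 'cell_B')
```

Nothing here depends on a saved Location History timeline: timestamps and coarse cell identifiers are enough to recover the two labels users care most about hiding.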

“Digital footprints predict personality traits with 85% accuracy from as few as 68 data points—validating Cambridge Analytica’s methodology and proving it wasn’t an aberration but a replicable technique” – Stanford Computational Social Science research, 2023

The Systemic Problem: Behavioral Metadata Persists

Here’s the critical vulnerability Cambridge Analytica exposed: you don’t need access to content to profile someone—behavioral metadata is sufficient.

Facebook had access to messages, photos, networks, and interests. But CA’s actual prediction power came from behavioral metadata: which posts you hovered over without liking, how long you looked at a photo before scrolling, whether you opened a link (regardless of what you did there).

Google privacy settings target content access and cross-platform sharing. They don’t address metadata profiling because that’s where Google’s actual profit is. This represents the same surveillance capitalism infrastructure that makes comprehensive behavioral tracking inevitable.

When you search “anxiety symptoms,” Google:

- Records the query timestamp

- Records the time you spent on each result

- Records whether you clicked medical sites (suggesting health anxiety) or conspiracy sites (suggesting a different anxiety pattern)

- Records what you searched next (often related to your anxiety)

- Records whether you deleted the search from history (suggesting shame/secrecy)

- Records whether you searched from your home (personal) or work (public-facing)

- Records whether you were browsing in Incognito mode (suggesting a deliberate attempt at secrecy)

This metadata profile is psychologically transparent. Cambridge Analytica would call this “neuroticism signals.” You can’t disable this collection. It’s invisible. It’s the cost of using Google’s free services.
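The list above can be read as a feature table. The sketch below shows, under assumed field names, how such signals could be summarized without ever storing the query text. `SearchEvent`, its fields, and `metadata_features` are hypothetical and do not reflect any actual Google log schema.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean

@dataclass
class SearchEvent:
    # Note: there is deliberately no field for the query text itself.
    timestamp: datetime
    dwell_seconds: float         # time spent on the clicked result
    deleted_from_history: bool
    incognito: bool

def metadata_features(events):
    """Summarize behavior from metadata alone; returns {} for no events."""
    n = len(events)
    if n == 0:
        return {}
    return {
        "late_night_share": sum(e.timestamp.hour < 5 for e in events) / n,
        "mean_dwell_seconds": mean(e.dwell_seconds for e in events),
        "deleted_share": sum(e.deleted_from_history for e in events) / n,
        "incognito_share": sum(e.incognito for e in events) / n,
    }

# Hypothetical events for one user.
events = [
    SearchEvent(datetime(2025, 3, 4, 2, 10), 240.0, True, False),
    SearchEvent(datetime(2025, 3, 4, 2, 25), 180.0, True, True),
    SearchEvent(datetime(2025, 3, 4, 13, 0), 30.0, False, False),
    SearchEvent(datetime(2025, 3, 5, 3, 40), 150.0, False, True),
]
features = metadata_features(events)
print(features["late_night_share"], features["mean_dwell_seconds"])  # 0.75 150.0
```

A downstream model would consume these ratios the same way the likes-based approach consumes page likes, which is why content-focused controls leave the profile largely intact.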

Why Post-Cambridge Analytica Regulation Missed This

GDPR, the California Consumer Privacy Act (CCPA), and similar regulations focused on “data access” and “consent.” They assumed the problem was that users didn’t know what data was collected. Cambridge Analytica proved the actual problem: behavioral metadata is too predictive to be safely collected in the first place.

GDPR Article 6 requires a “lawful basis” for processing. Google’s basis is “legitimate interest” in operating its services. The regulation doesn’t ban behavioral profiling; it requires transparency and a lawful basis such as consent. But:

- Users don’t understand metadata profiling

- Consent is a condition of using the service (not genuinely voluntary)

- Metadata collection is technically unavoidable if you use the service at all

Google can comply with every privacy law while building the exact psychological profiles Cambridge Analytica’s founders would have dreamed of.

| Data Collection Method | Cambridge Analytica (2016) | Google (2025) |
|---|---|---|
| Primary Source | Facebook API scraping | First-party service integration |
| Legal Status | Violated Facebook’s terms retroactively | Fully compliant with privacy regulations |
| Data Richness | 87M profiles, social graph analysis | 4B+ users, cross-platform behavioral tracking |
| Profiling Speed | 68 likes for 85% personality accuracy | 10 minutes of search/YouTube for equivalent profile |

What Actually Happens with These Profiles

Google doesn’t publicly monetize psychological profiling the way Cambridge Analytica did with Facebook data (political micro-targeting). Instead:

Advertising networks: Google sells advertiser access to users matching psychological profiles. An advertiser can’t buy “people with anxiety,” but can buy users matching behavioral patterns correlated with anxiety susceptibility. Same outcome, different language.

YouTube recommendation algorithm: Feeds you content optimized to keep you watching. The algorithm learns your psychological vulnerabilities and serves content that exploits them. If your behavior signals low self-esteem, YouTube recommends content that triggers comparison anxiety (which keeps you watching). Cambridge Analytica proved this works; YouTube optimized it.

Search personalization: Results ranked based on psychological profile. Two people searching “election results” get different results based on their behavioral profile. Cambridge Analytica called this “targeting”; Google calls it “personalization.”

Predictive inference: Google builds models predicting what you’ll buy, click, watch, believe, fear, desire. These predictions are sold to advertisers who use them to craft persuasive messages. Cambridge Analytica’s entire business model.

The infrastructure now extends to emotional vulnerability mapping across multiple platforms, creating comprehensive psychological profiles that Cambridge Analytica could only dream of accessing.

The Deepfake of Digital Privacy

Adjusting Google privacy settings is performative compliance. It signals user awareness and gives Google regulatory cover (“we provided privacy controls”). It changes which people see your interests, not whether your interests are profiled.

Facebook’s reform after the Cambridge Analytica scandal looked similar: it added privacy controls and users became “aware,” but the underlying behavioral profiling infrastructure remained intact. Different company name later (Meta, with Instagram and Threads), same profiling machine.

Google’s 2026 privacy settings offer the identical false comfort:

- You’ve adjusted the controls

- You’ve asserted agency

- You’ve followed privacy best practices

- And meanwhile, your behavioral profile grows richer

The only practical privacy would be: Don’t use Google. But Google owns the infrastructure of internet navigation. That’s the actual surveillance capitalism advantage Cambridge Analytica exposed—not that profiling is possible, but that the profilers control the tools everyone depends on.

Until behavioral profiling is banned rather than regulated, privacy settings are theater. Cambridge Analytica didn’t fail because of consent violations—it failed because its methods became public. The methods persist. They’re just more distributed now.