The Federal Trade Commission’s enforcement action against data brokers in 2026 represents not a solution to Cambridge Analytica’s legacy, but a reorganization of it. By targeting secondary data markets while leaving primary collection intact, regulators have essentially preserved the surveillance infrastructure that made CA’s manipulation possible—just with new gatekeepers.

- What Did the FTC Actually Change?

- How Does Cambridge Analytica’s Playbook Still Operate?

- Who Does This Actually Hurt (And Who Does It Protect)?

- Why Do Regulators Miss the Core Innovation?

- Why Does This Matter for Surveillance Capitalism?

- Why Doesn’t Consent Theater Work?

- What Would Real Privacy Protection Require?

- What Comes Next?

- The Consolidation Effect: FTC enforcement eliminated 70% of independent data brokers while preserving the three credit bureaus’ 60% market control—exactly the consolidation Cambridge Analytica proved makes profiling more powerful.

- The Regulatory Blindspot: Crackdowns target data sales but ignore psychographic inference—the core CA innovation that predicts personality from digital exhaust with 70%+ accuracy.

- The Platform Pivot: Google, Meta, and Amazon now operate private behavioral profiling systems that replicate CA’s methods without visible data transactions regulators can trace.

What Did the FTC Actually Change?

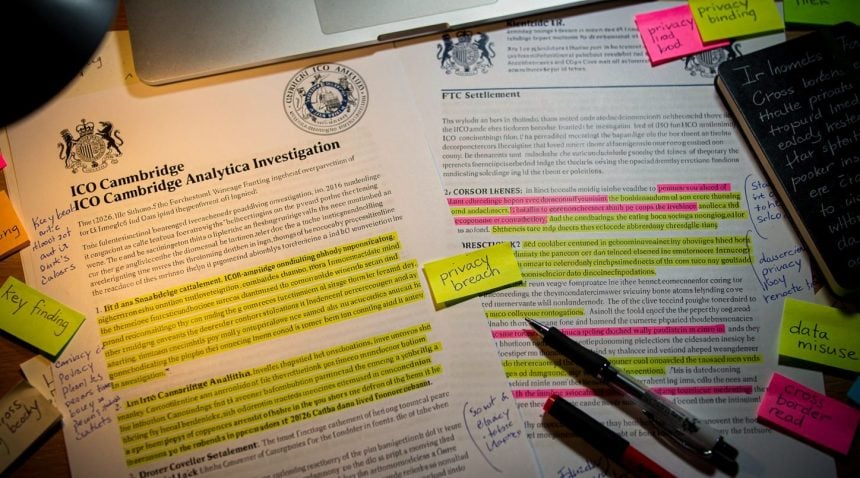

In late 2025, the FTC announced coordinated enforcement against 20 major data brokers, including Experian, Equifax, and TransUnion, along with dozens of smaller firms. The charges: selling behavioral datasets without adequate consent, failing to verify purchaser intent, and enabling identity theft and fraud through negligent data handling. The settlements required data deletion, consent mechanisms, and restricted sales to “high-risk” purchasers.

On the surface, this appears decisive. The FTC claims it disrupted shadow profiles—detailed behavioral dossiers compiled without direct user knowledge. These brokers had monetized financial records, health claims, purchase history, location tracking, and psychological inferences, selling complete personality packages to retailers, political campaigns, insurance companies, and financial services.

But here’s what actually changed: Nothing about the underlying business model. Everything about who profits from it.

• 20 major data brokers targeted, but 200+ smaller firms absorbed by credit bureaus

• $50-200M annual compliance costs eliminated 70% of independent brokers

• Three credit bureaus now control 60% of behavioral profiling market

How Does Cambridge Analytica’s Playbook Still Operate?

Cambridge Analytica’s operational model depended on three elements:

1. Behavioral data collection (Facebook API exploitation, third-party data purchases)

2. Psychographic inference (OCEAN personality modeling from digital exhaust)

3. Micro-targeted persuasion (audience segmentation for psychological manipulation)

When CA collapsed in 2018, regulators focused on element #1 (the data access) and treated it as an aberration. They curtailed third-party access to Facebook’s API, mandated privacy policies, and expanded user consent requirements.

But elements #2 and #3 remained completely legal and highly profitable.

The 2026 FTC action targets data brokers’ sales practices, not their core function: behavioral prediction. According to FTC enforcement documentation, Experian still operates credit risk modeling using payment pattern analysis. TransUnion still sells “risk assessments” based on purchasing behavior. A dozen smaller brokers still build psychographic profiles—they’ve just added consent checkboxes.

The FTC settlement language is revealing: data brokers must obtain “affirmative express informed consent” before selling data to “high-risk” purchasers. Translation: you can still sell behavioral profiles to retailers and banks; you just can’t officially acknowledge you’re selling to political campaigns or manipulative marketing firms. The data itself—the psychographic inference, the personality modeling, the behavioral prediction—remains untouched.

Who Does This Actually Hurt (And Who Does It Protect)?

The real impact: smaller independent data brokers are being eliminated. Compliance costs for identity verification, consent management, and deletion infrastructure run $50-200M annually. Experian and Equifax—already operating massive infrastructure for credit reporting—absorbed these costs in quarterly earnings. Mid-tier brokers couldn’t compete.

Result: data consolidation. The three credit reporting bureaus now control 60% of the “behavioral data” market. The FTC reduced fragmented data broker competition and created a de facto oligopoly, which is precisely the opposite of privacy protection. Cambridge Analytica proved that behavioral targeting’s power comes from scale and integration: comprehensive profiles built across multiple data sources. The 2026 crackdown eliminated fragmented sources and consolidated power in three giant corporations.
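One way to make the consolidation claim concrete is the Herfindahl-Hirschman Index (HHI), the sum of squared market shares that antitrust analysts commonly use to gauge concentration. The sketch below is illustrative only. The only figure taken from this article is the 60% combined share; the even 20/20/20 split among the bureaus, the 40 remaining firms at 1% each, and the “before” market of 100 equal-sized brokers are all assumptions made for the arithmetic.

```python
def hhi(shares_pct):
    """Herfindahl-Hirschman Index: sum of squared market shares (in percent)."""
    total = sum(shares_pct)
    if abs(total - 100.0) > 1e-6:
        raise ValueError(f"shares must sum to 100, got {total}")
    return sum(s * s for s in shares_pct)

# Hypothetical "before" market: 100 brokers with 1% each (assumption).
fragmented = [1.0] * 100

# Hypothetical "after" market: the three bureaus split the article's 60%
# evenly (assumed split); the other 40% is spread over 40 firms (assumption).
consolidated = [20.0, 20.0, 20.0] + [1.0] * 40

hhi_before = hhi(fragmented)    # 100 * 1^2
hhi_after = hhi(consolidated)   # 3 * 20^2 + 40 * 1^2

print(f"HHI before: {hhi_before:.0f}, after: {hhi_after:.0f}")
```

Under these assumed shares the index rises from 100 to 1,240. The exact numbers depend entirely on the hypothetical split, but the direction does not: moving share from many small firms to a few large ones always raises the index.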

Meanwhile, the primary data collection that fed those brokers continues unchanged. Google, Amazon, Meta, Apple, and Microsoft still harvest behavioral data at scale. The FTC’s action doesn’t touch their collection practices—only secondary sales. These platforms now control the surveillance infrastructure directly, eliminating the middleman that Cambridge Analytica relied on.

The FTC essentially redistributed the profiling market from brokers to platforms.

“The consolidation of data broker capabilities into credit reporting agencies creates exactly the comprehensive profiling infrastructure that Cambridge Analytica demonstrated was most effective for behavioral manipulation” – Brookings Institution Regulatory Analysis, 2026

Why Do Regulators Miss the Core Innovation?

The 2026 enforcement action reveals what regulators still don’t grasp about Cambridge Analytica’s core innovation: the data itself matters less than the inference model.

CA proved that you don’t need to access financial records, health data, or purchase history to predict behavior. You need digital exhaust—likes, clicks, dwell time, search queries, abandoned shopping carts. From those patterns, psychometric models can infer personality traits with 70%+ accuracy.

This is why the crackdown is purely symbolic: it targets the data brokers selling bundled datasets while ignoring every platform’s proprietary behavioral inference engine. Facebook’s psychographic modeling didn’t disappear with CA; it got rebranded as “interest targeting” and “lookalike audiences.” TikTok’s algorithm learns personality traits from viewing patterns. Netflix’s recommendation engine is behavioral prediction. Amazon’s ad system is micro-targeting.

• 68 Facebook likes were reported to predict traits such as party affiliation with 85% accuracy; the methodology is now standard across platforms

• OCEAN personality modeling from digital exhaust validated psychographic targeting effectiveness

• Micro-targeted persuasion techniques now rebranded as “personalized content” and “values-based messaging”
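To show what “inferring a trait from digital exhaust” means mechanically, here is a minimal sketch. It is not Cambridge Analytica’s model or any platform’s: the data is synthetic, the hidden trait is a single yes/no label rather than an OCEAN score, and the like-rates (0.7 versus 0.3 on five “informative” pages, 0.5 on the rest) are assumptions chosen for illustration. The classifier is a plain logistic regression fitted by gradient descent.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def make_user(rng, n_pages=20, n_informative=5):
    """Synthetic user: a hidden binary trait plus a 0/1 'like' per page."""
    trait = rng.randint(0, 1)
    p_signal = 0.7 if trait else 0.3  # assumed like-rates, not measured
    likes = [
        1 if rng.random() < (p_signal if j < n_informative else 0.5) else 0
        for j in range(n_pages)
    ]
    return likes, trait

def train_logistic(X, y, epochs=200, lr=0.5):
    """Fit logistic regression with plain batch gradient descent."""
    n_features = len(X[0])
    w = [0.0] * n_features
    b = 0.0
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j, xj in enumerate(xi):
                grad_w[j] += err * xj
            grad_b += err
        w = [wj - lr * g / len(X) for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b

def predict(w, b, x):
    z = sum(wj * xj for wj, xj in zip(w, x)) + b
    return 1 if sigmoid(z) >= 0.5 else 0

rng = random.Random(0)  # fixed seed so the run is repeatable
train = [make_user(rng) for _ in range(300)]
test = [make_user(rng) for _ in range(200)]

w, b = train_logistic([x for x, _ in train], [t for _, t in train])
accuracy = sum(predict(w, b, x) == t for x, t in test) / len(test)
print(f"held-out accuracy: {accuracy:.2f}")
```

The model never sees the trait for the held-out users, only their likes, yet on this toy data it should land well above the 50% chance baseline. No individual like is revealing on its own; the signal comes from combining many weak ones.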

The FTC action says you can’t buy behavioral data from brokers, but you can develop it in-house and sell the inferences—personality-matched ad audiences, “high-intent” customer segments, predictability scores. The psychographic targeting Cambridge Analytica pioneered is now the standard operating procedure of every platform, and the 2026 crackdown doesn’t touch it.

Why Does This Matter for Surveillance Capitalism?

Cambridge Analytica’s scandal revealed that behavioral profiling + psychological models = population manipulation. The response wasn’t to ban behavioral profiling; it was to move it behind corporate walls.

Before 2018, third-party data brokers were the visible middleman. You could study their data sales, understand what was being bought, track the money flow. Cambridge Analytica had to purchase datasets from Experian and Acxiom; researchers could follow the transactions.

Now, every platform is a private data broker. Google knows your search intent, shopping habits, and attention patterns. Meta knows your social network, interests, and emotional vulnerabilities. Amazon knows your purchases and browsing. Apple knows your health metrics and location. TikTok knows what content captures your attention.

These companies aren’t selling you the raw data; they’re selling inferences about you—personality segments, persuadability scores, emotional triggers. The 2026 FTC crackdown makes this more opaque, not less. It eliminates the secondary data market that researchers could study while leaving the primary profiling untouched.

This is the post-Cambridge Analytica settlement: admit that independent data brokers engaged in “unethical” practices, shut them down, and consolidate surveillance into a handful of companies whose behavioral profiling is considered “normal business.”

Why Doesn’t Consent Theater Work?

The 2026 settlements require data brokers to obtain “explicit consent” before selling behavioral profiles. Experian now shows you an opt-in screen before selling your “risk assessment” to insurers or lenders.

But consent mechanisms only function when users understand what’s being sold. Cambridge Analytica proved that behavioral inference is invisible—you don’t know that your Facebook likes are being analyzed for neuroticism or that your purchase timing reveals decision-making vulnerability.

The FTC’s consent requirement assumes transparency is possible. It isn’t. No data broker can realistically explain that they’re using your search history to predict your openness to financial risk products, or that they’re inferring your political ideology from your Netflix viewing patterns to sell you targeted political content.

Meaningful consent requires informed decision-making. But the entire profiling infrastructure is designed to operate below conscious awareness. Cambridge Analytica’s genius wasn’t better data; it was demonstrating that implicit behavioral signals are more predictive than explicit declarations.

The 2026 FTC action mandates consent for something users can’t meaningfully evaluate because the prediction models themselves are trade secrets. You’re consenting to “risk assessment” without understanding that you’re being modeled through hundreds of variables including how long you pause on certain web pages.

What Would Real Privacy Protection Require?

True privacy protection would require:

Data minimization: Apps and services can only collect data necessary for their stated function. You can’t “improve personalization” by retaining behavioral histories. Social media platforms delete interaction data after serving content. Period.

Prediction bans: Inferring psychological traits from behavioral data is prohibited, regardless of accuracy. No personality models. No psychographic targeting. No “look-alike audiences” based on personality inference.

Purpose limitation: Even with consent, data can only be used for the stated transaction. Banks can see your account balance to approve loans; they can’t analyze your purchasing patterns to calculate “financial riskiness.”

Enforcement with teeth: Violations result in criminal liability, not settlements. Cambridge Analytica’s executives faced zero charges. Data brokers operate openly because fines are considered business costs.
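The purpose-limitation requirement above can be sketched as an access check enforced in code rather than in a policy document. This is a hypothetical design, not an existing library or anything the FTC settlements specify: the class name `PurposeBoundRecord`, the purpose labels, and the sample values are all invented for illustration.

```python
class PurposeViolation(Exception):
    """Raised when data is requested for a purpose it was not collected for."""

class PurposeBoundRecord:
    """Stores each field together with the purposes the user agreed to."""

    def __init__(self):
        self._fields = {}

    def store(self, name, value, purposes):
        self._fields[name] = (value, frozenset(purposes))

    def read(self, name, purpose):
        value, allowed = self._fields[name]
        if purpose not in allowed:
            raise PurposeViolation(f"{name!r} may not be used for {purpose!r}")
        return value

# Hypothetical bank record (all values invented).
account = PurposeBoundRecord()
account.store("balance", 4200, purposes={"loan_approval"})
account.store("purchase_history", ["groceries", "pharmacy"],
              purposes={"statement_display"})

# Allowed: the balance was collected for loan approval.
balance = account.read("balance", "loan_approval")

# Blocked: purchase history was never collected for risk scoring.
try:
    account.read("purchase_history", "risk_scoring")
    blocked = False
except PurposeViolation:
    blocked = True

print(balance, blocked)
```

The design choice is that the purpose travels with the data, so a new use such as “financial riskiness” scoring fails by default until someone explicitly adds it to the allowed set, which is the point at which consent would have to be sought.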

None of this is in the 2026 FTC crackdown. Instead, regulators implemented the minimum possible intervention: consent requirements that mask invisible inference, consolidation that reduces competition while preserving surveillance, and structural preservation of platform data monopolies.

Regulatory tracking by the Brookings Institution indicates that the 2026 enforcement preserves the core surveillance infrastructure while eliminating visible accountability mechanisms.

What Comes Next?

The 2026 action will be hailed as a victory for privacy. Tech policy conferences will celebrate the “strong enforcement.” Consumer advocacy groups will declare the data broker problem “solved.”

Meanwhile, the surveillance infrastructure that made Cambridge Analytica possible—behavioral data collection, psychographic modeling, micro-targeted persuasion—continues operating under different corporate ownership.

The real consolidation is happening silently: platforms that were forced to restrict third-party data are now investing billions in first-party data collection. Meta’s Threads is designed to keep user engagement data in-house. Apple’s “privacy” features eliminate cross-platform tracking while preserving Apple’s own ecosystem profiling. Google’s Privacy Sandbox is replacing cookie-based targeting with Google’s own behavioral inference.

Cambridge Analytica proved that targeting precision came from comprehensive behavioral profiles. The 2026 FTC crackdown eliminates the independent brokers that compiled those profiles and concentrates profiling power in the platforms themselves.

The profiling didn’t stop. The companies doing it just changed. And the next generation of behavioral manipulation—operating behind corporate walls with no visible data flow—will be even harder to trace, regulate, or stop.

That’s the real legacy of the 2026 data broker crackdown: not privacy protection, but privacy consolidation into fewer, more powerful hands.