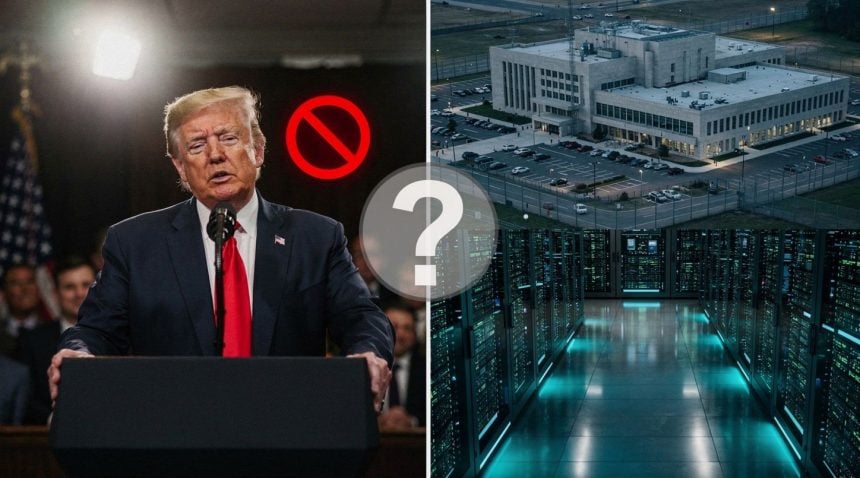

Two weeks after Anthropic CEO Dario Amodei walked out of the White House, the National Security Agency began using the company’s new AI model, even though Trump had ordered all federal agencies to stop working with Anthropic just eight weeks earlier.

The contradiction sits at the heart of an escalating power struggle between Silicon Valley and the Pentagon. In February, Trump issued a blanket order for government agencies to cease using Anthropic’s services after the company refused to remove certain safeguards during military contract negotiations. Now, according to sources who spoke with Axios, the NSA is actively deploying Anthropic’s Mythos Preview, a general-purpose language model described by the company as “strikingly capable at computer security tasks.”

- The Policy Reversal: The NSA began using Anthropic’s Mythos Preview just weeks after Trump banned all federal agencies from the company’s services.

- The Scale: Roughly 40 organizations received access to Mythos Preview, with usage spreading across multiple Defense Department units.

- The Legal Paradox: Anthropic remains simultaneously banned as a “supply chain risk” while providing AI services to America’s most sensitive intelligence agency.

Anthropic announced Mythos Preview at the beginning of April. The company positioned it as a tool designed for defensive security work—precisely the kind of capability a national security agency would want. The timing matters. Just days before the NSA began using Mythos, Amodei met with White House chief of staff Susie Wiles and other officials to discuss the new model. The White House later characterized the Friday meeting as “productive and constructive.” When asked about it by reporters, President Trump said he had “no idea” the meeting had taken place.

The NSA is one of roughly 40 organizations Anthropic granted access to Mythos Preview. According to one Axios source, the model is “being used more widely within the department” than just the NSA itself, suggesting adoption across multiple Defense Department units. The pattern reflects a broader problem in partnerships between government and private technology companies, where policy and practice often diverge.

Why Did the NSA Ignore Trump’s AI Ban?

This reversal exposes the fragility of Trump’s February ban. The order was explicit: all government agencies must drop Anthropic’s services. The rationale, as the Pentagon later formalized it, was that Anthropic posed an “unacceptable risk to national security.” Yet within weeks, one of America’s most sensitive intelligence agencies began using the company’s newest product. Either the risk assessment changed dramatically, or the ban was never as absolute as it appeared.

- 8 weeks: time between Trump’s ban and NSA adoption

- 40 organizations: total entities with Mythos Preview access

- Multiple units: Defense Department divisions now using the technology

The legal backdrop complicates matters further. Anthropic filed lawsuits against the Department of Defense in two courts in March, challenging the “supply chain risk” designation the Trump administration had slapped on the company. One federal court granted Anthropic a preliminary injunction, temporarily blocking the designation. In the other court, judges denied the company’s motion to lift the label. The company remains simultaneously banned and litigating against the very agencies now using its technology.

What Safeguards Did the NSA Negotiate?

For users of government AI systems, this creates an opaque situation. The NSA’s use of Mythos Preview means that intelligence analysis, threat detection, and potentially classified decision-making are now flowing through an Anthropic model. The public has no visibility into what safeguards, if any, the agency negotiated with the company. The Pentagon’s original complaint was that Anthropic refused to budge on “certain safeguards” during military contract talks—but what those safeguards were, and whether they were waived for the NSA, remains undisclosed.

According to research from Stanford Law School, less than 2% of AI PhDs work in government, creating a knowledge gap that makes agencies dependent on private sector AI capabilities. This dependency complicates efforts to maintain strict oversight of AI systems used in national security operations.

Is This About Security or Politics?

The broader pattern suggests that Trump’s ban was less a technical or security decision and more a political one, tied to the Pentagon feud. When Amodei met with White House officials to discuss Mythos, something shifted. The model’s capabilities in computer security—a domain where the government has acute needs—apparently outweighed the earlier objections. Or perhaps the ban was always meant to pressure Anthropic into negotiating on the Pentagon’s terms, and the NSA’s adoption of Mythos is evidence that pressure worked.

- Government AI procurement often reflects political tensions rather than technical assessments

- National security agencies operate with different risk calculations than civilian departments

- The “supply chain risk” designation appears more flexible than originally presented

What remains unclear is whether Anthropic made concessions to unlock access, or whether the NSA simply found a workaround to the February order. The company has been aggressive in defending its position, refusing to compromise on safety practices even with a government contract at stake. That stance earned it the “supply chain risk” label. Yet here it is, embedded in one of the government’s most secretive agencies.

The contradiction raises a harder question: if the NSA can use Anthropic’s models despite the ban, what does the ban actually prevent? Other agencies might follow the NSA’s path, quietly adopting Mythos under the cover of pilot programs or research initiatives. The February order becomes theater: a public show of force that dissolves under pressure from agencies with genuine national security needs. It is a familiar dynamic in technology policy, where public statements often conflict with operational realities.

What Happens Next?

Anthropic’s legal battles with the Pentagon continue, and the supply chain risk designation still stands in the court that declined to lift it. Yet the NSA’s adoption of Mythos suggests the designation is more symbolic than substantive. The real question is whether other agencies will now openly acknowledge their use of Anthropic’s technology, or whether the NSA’s quiet deployment becomes the template for how the government works around its own restrictions.

The case illustrates the growing tension between political directives and operational needs in government AI adoption. As Stanford HAI research has documented, federal agencies face mounting pressure to deploy AI capabilities while navigating complex regulatory and political constraints. The NSA’s decision to use Mythos Preview despite the ban suggests that national security imperatives may ultimately override political considerations—at least when the technology offers capabilities the government cannot easily replicate elsewhere.