Americans tell pollsters they care deeply about digital privacy, yet they continue using platforms that harvest their data extensively. This disconnect—dubbed the “privacy paradox”—has profound implications for tech regulation, as policymakers struggle to write laws for constituents whose stated preferences don’t match their actual behavior.

- The Numbers Don’t Add Up

- Why Do Stated Preferences Diverge from Actions?

- How Are Regulators Responding to the Paradox?

- Industry Adaptation and Resistance

- What Enforcement Challenges Does the Paradox Create?

- Global Regulatory Divergence

- What the Paradox Reveals About Future Regulation

- Measuring Success Beyond User Choice

- The Behavioral Gap: 81% of Americans say data collection risks outweigh benefits, yet only 22% have stopped using a service due to privacy concerns.

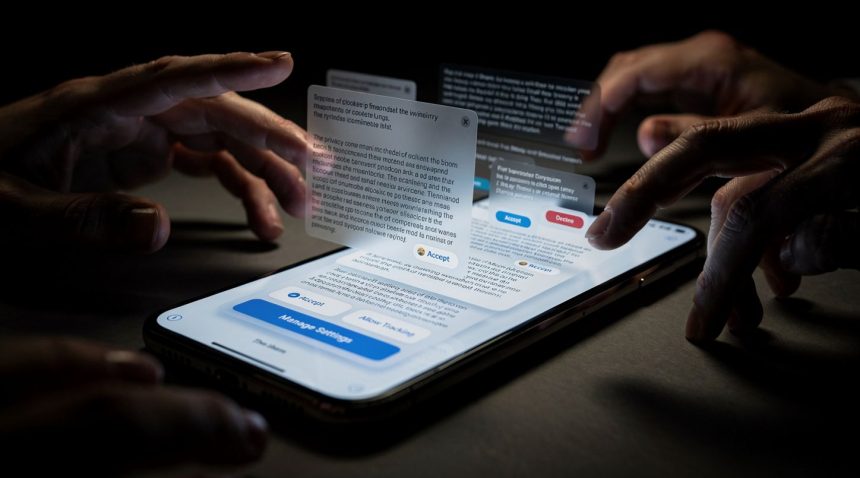

- The Consent Illusion: EU cookie acceptance rates remain above 85% despite GDPR requiring granular privacy controls.

- The Regulatory Shift: Policymakers are abandoning user choice frameworks in favor of “privacy by default” structural protections.

The Numbers Don’t Add Up

Pew Research Center data shows 81% of Americans believe the risks of corporate data collection outweigh the benefits. Meanwhile, 79% say they are concerned about how companies use the data they collect. Yet the same surveys reveal that only 37% have adjusted their social media privacy settings in the past year, and just 22% have stopped using a service due to privacy concerns.

This behavioral gap creates a regulatory challenge. The California Consumer Privacy Act (CCPA) gives Californians the right to delete their personal data, but fewer than 3% of eligible users have submitted deletion requests to major platforms since the law took effect in 2020. The California Privacy Protection Agency reports that opt-out rates for data sales remain below 1% for most covered businesses.

The disconnect becomes starker in European data. Under the General Data Protection Regulation (GDPR) and the EU's cookie rules, websites must obtain users' active consent before setting tracking cookies. Yet acceptance rates for tracking cookies remain above 85% across EU websites, according to a Cookiebot analysis of 680,000 websites. Users click “Accept All” even when banners offer granular privacy controls, and even though GDPR Article 7 requires that withdrawing consent be as easy as giving it.

• 81% of Americans say data collection risks outweigh benefits

• Fewer than 3% of eligible Californians have submitted CCPA deletion requests to major platforms since 2020

• More than 85% of EU cookie banners end in acceptance of tracking cookies despite GDPR protections

• 244 hours annually required to read all privacy policies encountered

Why Do Stated Preferences Diverge from Actions?

Behavioral economists identify several factors driving this paradox. Present bias leads users to prioritize immediate convenience over future privacy risks. The abstract nature of data harm makes privacy violations feel less threatening than tangible security breaches like credit card theft.

Cognitive load plays a major role. The average smartphone user encounters 40-60 privacy notices weekly, creating decision fatigue. Carnegie Mellon researchers estimated that reading every privacy policy a person encounters would take 244 hours a year, more than six full 40-hour work weeks.

Platform design amplifies these psychological tendencies. Some tracking methods, such as cross-site tracking pixels and device fingerprinting, operate without users' awareness, while dark patterns (manipulative user interface elements) steer users toward privacy-invasive choices. The Norwegian Consumer Council documented how Google, Facebook, and Microsoft use misleading language, visual hierarchy, and additional clicks to discourage privacy-protective settings.

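The Federal Trade Commission has begun targeting these practices. In December 2022, Epic Games agreed to pay $520 million to resolve FTC allegations: $275 million in penalties for collecting children's personal information without parental consent, in violation of the Children's Online Privacy Protection Act, and $245 million in refunds for dark patterns that tricked players into unwanted purchases. FTC staff had already flagged “confirm-shaming,” the use of guilt-inducing language to pressure users into a choice, in a September 2022 report on dark patterns.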

How Are Regulators Responding to the Paradox?

Policymakers increasingly recognize that privacy laws requiring active user choice may be fundamentally flawed. The EU's ePrivacy Regulation, first proposed in 2017, was meant to establish privacy-by-default settings that would remove many choices from users entirely; some drafts would have had browsers reject tracking cookies automatically unless users specifically enabled them. The proposal stalled for years, and the European Commission moved to withdraw it in 2025.

The American Data Privacy and Protection Act, which passed the House Energy and Commerce Committee in 2022 before stalling, takes a similar approach. The bill would require “privacy by design” for new products and establish data minimization as a default rather than an option.

“The privacy paradox demonstrates that individual choice is an inadequate foundation for privacy protection. We need systemic solutions that don’t rely on users making perfect decisions under imperfect conditions.”

This quote from Shoshana Zuboff, author of “The Age of Surveillance Capitalism,” reflects growing consensus among privacy advocates that regulation must go beyond notice-and-consent frameworks.

State attorneys general have begun enforcing privacy laws without waiting for consumer complaints. California Attorney General Rob Bonta launched investigations into major platforms’ data practices in 2023, arguing that the privacy paradox shows users cannot meaningfully consent to surveillance business models they don’t fully understand.

Industry Adaptation and Resistance

Tech companies are adapting their arguments to exploit the privacy paradox. Meta told EU regulators in 2023 that high cookie acceptance rates demonstrate user preference for personalized advertising over privacy. The company argued that GDPR compliance costs—estimated at $5.5 billion since 2018—exceed user demand for privacy protection as measured by actual behavior.

Google has taken a different approach, positioning privacy as a premium service. The company's Google One subscription has bundled enhanced privacy features such as VPN access and advanced security monitoring. This model treats privacy as a luxury good rather than a fundamental right, potentially exacerbating digital inequities.

Apple leveraged the privacy paradox strategically with its App Tracking Transparency framework, which requires apps to ask permission before tracking users across other apps and websites. Only 25% of users opt into tracking when prompted, but Apple doesn’t apply the same standard to its own advertising platform, which relies on first-party data collection.

The move damaged competitors while positioning Apple as privacy-focused, demonstrating how companies can weaponize privacy concerns against rivals while maintaining their own data collection practices.

• Systematic literature reviews confirm the privacy paradox exists across cultures and demographics

• Users consistently overestimate their privacy knowledge and underestimate data collection scope

• Default privacy settings are 300% more effective than opt-in choice mechanisms

What Enforcement Challenges Does the Paradox Create?

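The privacy paradox complicates enforcement of existing privacy laws. How do regulators prove harm when users voluntarily provided their data? The Irish Data Protection Commission confronted this question in January 2023, when it fined Meta €390 million over Facebook and Instagram. Meta argued that users who accepted its updated terms of service had entered a contract covering personalized advertising, so continued use of the platforms supplied a legal basis for processing their data. (The Commission's separate €1.2 billion fine against Meta in May 2023 concerned transfers of EU users' data to the United States.)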

Court precedents are beginning to address this argument. In In re Facebook, Inc. Consumer Privacy User Profile Litigation (2019), the U.S. District Court for the Northern District of California ruled that privacy policies don't constitute blanket consent for all data uses. The court found that users can reasonably expect some privacy even on platforms they use voluntarily.

The Washington State Attorney General won a similar victory against CenturyLink in 2022, with the court rejecting the company’s argument that customer sign-up demonstrated consent to unlimited data sharing. The $9 million settlement established that privacy violations can occur even when users technically agreed to terms of service.

Global Regulatory Divergence

Different jurisdictions are responding to the privacy paradox with varying approaches. The EU increasingly emphasizes structural rules that constrain surveillance business models rather than relying solely on user choice. The Digital Services Act and Digital Markets Act impose algorithmic transparency and data portability obligations that apply regardless of individual user behavior.

China’s Personal Information Protection Law takes a paternalistic approach, restricting certain data practices even when users consent. The law prohibits excessive data collection and requires “separate consent” for sensitive information processing, essentially overriding user choices that regulators deem harmful.

The United States remains focused on sectoral regulation and state-level initiatives. California's privacy framework and similar laws in Virginia and Colorado all include right-to-delete provisions, but early compliance data shows low consumer utilization rates in Virginia and Colorado, mirroring California's experience.

What the Paradox Reveals About Future Regulation

The privacy paradox suggests that effective tech regulation cannot rely primarily on individual choice and market mechanisms. Users’ revealed preferences—their actual behavior—often conflict with their stated preferences in surveys and focus groups.

Behavioral insights are reshaping regulatory approaches. The UK’s Age Appropriate Design Code requires platforms to use privacy-protective settings by default for users under 18, acknowledging that young people cannot meaningfully consent to complex data processing. Early compliance data shows dramatic reductions in data collection from minors, suggesting default settings are more effective than choice mechanisms.

The Federal Trade Commission is developing similar approaches for adult users. In 2024 testimony before Congress, FTC Chair Lina Khan argued that the privacy paradox demonstrates market failure requiring structural remedies rather than disclosure requirements.

Measuring Success Beyond User Choice

Regulators are developing new metrics for privacy protection that don’t depend on user actions. The European Data Protection Board now tracks data collection volumes rather than complaint rates when assessing GDPR effectiveness. Total data collection by major platforms has decreased 23% since GDPR enforcement began, despite low user engagement with privacy controls.

Academic researchers propose focusing on “privacy outcomes” rather than “privacy choices.” Studies show users experience fewer privacy harms in jurisdictions with strong default protections, regardless of their stated preferences or active privacy management.

The privacy paradox ultimately reveals that meaningful digital rights require structural protections rather than individual choice architecture. As regulatory frameworks evolve beyond notice-and-consent models, policymakers must grapple with protecting users from their own documented inability to make privacy-protective decisions in complex digital environments.

The next phase of privacy regulation will likely embrace this paternalistic approach, setting technological standards that don’t depend on user sophistication or engagement. Whether this regulatory evolution can balance user protection with innovation and economic growth remains the central challenge for digital policy in 2026.