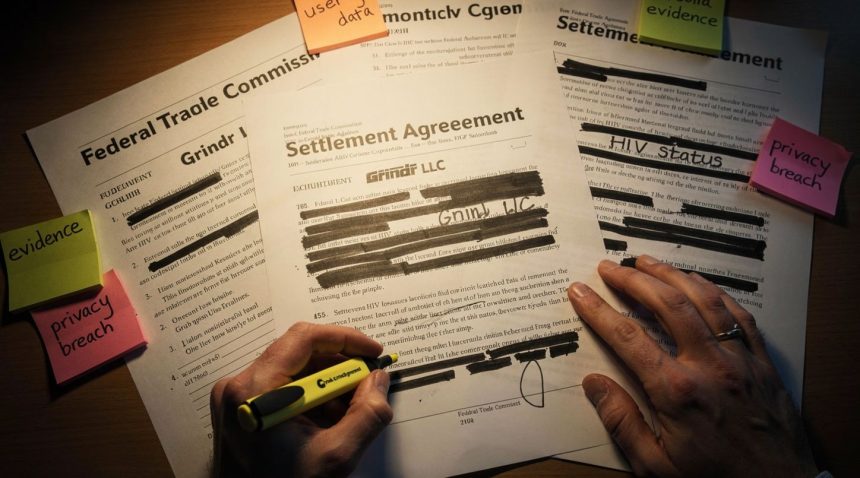

Grindr, the dating app with 11 million monthly active users, faces a $20 million settlement with the Federal Trade Commission over sharing sensitive health data with third-party companies without explicit user consent. The violation centers on HIV status information that users provided in confidence and that reached advertisers, data brokers, and analytics firms.

Key Findings:

- The Data Pipeline: Grindr transmitted HIV status and health data to 135 external companies between 2017 and 2023.

- The Technical Method: The app sent sensitive data inside routine API calls and SDK integrations, which kept the transmission out of users’ view.

- The Legal Precedent: Apps serving marginalized communities now face heightened FTC scrutiny for health data violations.

The Settlement and What Actually Happened

The FTC settlement, finalized in January 2026, documents a systematic practice spanning years. Grindr’s privacy policy claimed the company would not share “sensitive data” with third parties for advertising purposes. The reality proved different.

Between 2017 and 2023, Grindr transmitted HIV status, sexual preferences, location data, and other health indicators to at least 135 external companies. Recipients included mobile analytics firms like AppsFlyer and Singular, ad networks, location data brokers, and even social media platforms. Some partners used this information for targeted advertising. Others aggregated it into broader datasets sold to healthcare companies and researchers without consent mechanisms.

The mechanism was technical obfuscation. Grindr didn’t explicitly label HIV status fields when sending data to partners. Instead, the app transmitted the information alongside other user attributes in API calls and SDK integrations. Third-party companies could reverse-engineer the data structure and identify which users disclosed HIV status—or infer it from behavioral patterns.
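One common safeguard against this kind of leakage is to filter every outbound event against an explicit allowlist before it reaches a third-party SDK. The sketch below is illustrative only: the field names, the `ALLOWED_FIELDS` set, and the `build_sdk_payload` helper are hypothetical and do not describe Grindr's code or any vendor's API.

```python
from typing import Any, Dict

# Hypothetical allowlist: only fields reviewed as non-sensitive may leave the app.
ALLOWED_FIELDS = frozenset({"event_name", "app_version", "os", "session_length_s"})


def build_sdk_payload(user_attributes: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of the event containing only allowlisted fields.

    Anything not explicitly allowed is dropped, so a newly added profile
    field (for example a health attribute) cannot ride along by accident.
    """
    return {k: v for k, v in user_attributes.items() if k in ALLOWED_FIELDS}


if __name__ == "__main__":
    event = {
        "event_name": "profile_view",
        "app_version": "9.1.0",
        "os": "android",
        "hiv_status": "positive",  # sensitive: must never be forwarded
        "lat": 40.7128,            # precise location: also dropped
        "lon": -74.0060,
    }
    print(build_sdk_payload(event))
```

The design choice here is default-deny: a denylist of known sensitive fields fails silently when a new field is added, while an allowlist fails closed.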

• 135 external companies received sensitive health data from Grindr

• 6 years of systematic data sharing violations (2017-2023)

• $20 million total penalty: $15M civil payment + $5M consumer redress

Beyond the $20 million payment, the settlement requires Grindr to undergo annual third-party security audits, obtain affirmative consent before sharing any health or sexual orientation data, and delete historical datasets containing sensitive information.

Why Does This Matter Beyond Grindr?

This case exposes how health data flows through the broader digital advertising ecosystem—often invisibly. Grindr is not unique; it’s representative.

The distinction matters: Grindr didn’t sell HIV status data to hospitals or insurance companies, and as a consumer app it is not covered by HIPAA (Health Insurance Portability and Accountability Act) in any case, because HIPAA binds health care providers, health plans, clearinghouses, and their business associates. Instead, the company shared data with advertising and analytics partners, operating in a regulatory gray zone. Health information transmitted for “analytics” or “fraud prevention” purposes has historically faced lighter scrutiny than health information handled for medical treatment.

The FTC’s theory of the case relied on the FTC Act Section 5, which prohibits unfair or deceptive practices. The deception angle is straightforward: the privacy policy said no sharing; the app did share. The unfairness angle is more sophisticated. The FTC argues that transmitting HIV status data to unvetted third parties without consent creates substantial injury—psychological harm from exposure, discrimination risk, stalking risk—that users couldn’t reasonably avoid.

This reasoning extends to any app collecting sensitive health, sexual orientation, or financial data, including apps that handle fertility, addiction recovery, mental health, or abortion care. The FTC’s position: if your privacy policy creates a reasonable expectation of confidentiality, you cannot violate it for operational convenience or revenue.

What Does the Enforcement Pattern Reveal?

This settlement reflects an FTC enforcement posture that took shape under Lina Khan, who became chair in 2021. The agency has prioritized privacy violations involving marginalized communities and sensitive health data.

The 2023 settlement with Amazon ($25 million) focused on Alexa data retention practices affecting all users. The 2024 action against Twitter/X examined data practices affecting hundreds of millions. The Grindr case, by contrast, targets a smaller platform with a specific user base but uses enhanced penalty theories.

The FTC argument boils down to this: apps targeting marginalized communities—LGBTQ+ users, in Grindr’s case—bear heightened responsibility for data protection because the harms from exposure are more severe. An ad tech company knowing your sexual orientation creates different risks than knowing your coffee preferences.

• Apps serving vulnerable populations face enhanced FTC scrutiny for data protection failures

• “Analytics” and “fraud prevention” justifications no longer shield sensitive data sharing

• Privacy policy promises create legally enforceable expectations of confidentiality

This reasoning may influence enforcement against other apps serving vulnerable populations. Apps focused on abortion care, HIV prevention, mental health treatment, addiction recovery, or reproductive health should expect heightened scrutiny. The baseline expectation: “We share data for analytics” no longer passes legal muster when the data is sensitive.

The FTC’s 2022 lawsuit against Kochava, over the sale of geolocation data that could reveal visits to reproductive health clinics, shows that this pattern extends beyond dating apps to any platform handling sensitive location or health information.

What Users Should Do Now

For current and former Grindr users, the settlement creates limited immediate remedies but establishes preventive principles.

For historical exposure: Users whose Grindr accounts were created between 2017 and 2023 may qualify for the $5 million consumer redress pool. The FTC settlement website provides claim submission details. Amounts per claimant typically range from $50 to $500 depending on claim volume. This redress acknowledges actual harm but does not eliminate it: reputational damage, harassment, or discrimination resulting from data exposure cannot be quantified or reversed through payment.
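The relationship between claim volume and payout is simple division. The sketch below assumes the full $5 million is split evenly among valid claims and ignores administrative costs; the claim volumes are illustrative assumptions, not figures from the settlement.

```python
REDRESS_POOL_USD = 5_000_000  # consumer redress amount stated in the settlement


def payout_per_claimant(claimants: int, pool: int = REDRESS_POOL_USD) -> float:
    """Even split of the pool across valid claims (assumes no admin costs)."""
    if claimants <= 0:
        raise ValueError("claimants must be positive")
    return pool / claimants


if __name__ == "__main__":
    for claimants in (10_000, 50_000, 100_000):  # assumed claim volumes
        print(f"{claimants:>7,} claims -> ${payout_per_claimant(claimants):,.2f} each")
```

Under these assumptions, a $500 payout corresponds to 10,000 claims and a $50 payout to 100,000 claims, which is under 1 percent of the app's 11 million monthly active users.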

For ongoing use: The settlement requires Grindr to implement affirmative opt-in consent before sharing health or sexual orientation data with any third party. Users should review the updated privacy settings within the app and explicitly deny consent to data sharing unless they trust the specific purpose.
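Affirmative opt-in means the default answer is no: sharing is blocked unless the user has explicitly granted it for that category and that recipient. A minimal sketch of the rule follows, assuming a hypothetical `ConsentStore` class and category names that are not taken from the settlement text.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple

ConsentKey = Tuple[str, str, str]  # (user_id, data_category, recipient)


@dataclass
class ConsentStore:
    """In-memory record of explicit opt-ins (hypothetical, for illustration)."""

    _grants: Dict[ConsentKey, bool] = field(default_factory=dict)

    def grant(self, user_id: str, category: str, recipient: str) -> None:
        """Record an explicit opt-in for one category and one recipient."""
        self._grants[(user_id, category, recipient)] = True

    def revoke(self, user_id: str, category: str, recipient: str) -> None:
        """Withdraw consent; later checks fall back to the default of False."""
        self._grants.pop((user_id, category, recipient), None)

    def may_share(self, user_id: str, category: str, recipient: str) -> bool:
        """Default-deny: absence of a recorded grant means no sharing."""
        return self._grants.get((user_id, category, recipient), False)


if __name__ == "__main__":
    store = ConsentStore()
    print(store.may_share("u1", "health", "analytics_vendor"))  # no grant yet
    store.grant("u1", "health", "analytics_vendor")
    print(store.may_share("u1", "health", "analytics_vendor"))
    print(store.may_share("u1", "health", "ad_network"))  # different recipient
```

Keying consent on the recipient as well as the category keeps a grant for one partner from silently covering another.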

For broader defense: Users concerned about health data exposure should examine permissions for any health, dating, or sensitive-domain apps. Check:

- Which third-party SDKs the app integrates (permission requests do not reveal these; app store privacy labels and tracker-scanning tools such as Exodus Privacy give a partial view)

- The privacy policy’s language about “analytics,” “fraud prevention,” and “service improvement”

- Whether the app stores location data and how it handles location history

- Whether the app claims to offer “anonymized” data sharing (anonymization standards vary widely and are often ineffective)
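On the last point, one rough way to see why “anonymized” data can still identify people is to count how many records share the same combination of indirect attributes (quasi-identifiers). The sketch below computes that smallest group size, the k in k-anonymity. The records and field names are made up for illustration, and k-anonymity is only one of several measures.

```python
from collections import Counter
from typing import Dict, Iterable, Sequence


def smallest_group_size(
    records: Iterable[Dict[str, str]], quasi_identifiers: Sequence[str]
) -> int:
    """Return k: the size of the smallest group sharing the same quasi-identifier values.

    k == 1 means at least one record is unique on these attributes and is
    therefore easy to link back to a person, even with names removed.
    """
    groups = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    if not groups:
        raise ValueError("no records supplied")
    return min(groups.values())


if __name__ == "__main__":
    # Made-up records with names already stripped ("anonymized").
    rows = [
        {"zip": "10001", "birth_year": "1990"},
        {"zip": "10001", "birth_year": "1990"},
        {"zip": "94110", "birth_year": "1985"},
        {"zip": "94110", "birth_year": "1987"},
    ]
    print(smallest_group_size(rows, ["zip", "birth_year"]))
    print(smallest_group_size(rows, ["zip"]))
```

With both attributes, two of the four made-up records are unique; dropping the birth year raises the smallest group to two, which shows how coarsening trades precision for protection.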

Apps claiming “encryption” warrant skepticism. Encryption in transit protects data on its way to company servers but says nothing about which third parties the company shares it with once it arrives and is decrypted.

What Comes Next for Health Data Protection?

The Grindr settlement establishes a precedent that will shape enforcement through 2026 and beyond. Expect:

Broader app audits: The FTC has signaled intent to examine health and dating apps systematically. Other platforms with significant sensitive data flows face examination.

State-level actions: Attorneys general in states with strong privacy laws—California, New York, Illinois—may pursue similar cases. New York’s enforcement record on health data privacy is particularly aggressive.

European enforcement: GDPR Article 9 treats health data as special category data requiring heightened protection. European regulators have already fined platforms for health data violations. The Grindr case may inform EU decisions.

Industry technical standards: The settlement does not require Grindr to implement specific data minimization practices—only consent and auditing. This creates ongoing risk. The next phase of enforcement may demand technical controls: disabling third-party data transmission by default, restricting SDK integrations, or implementing differential privacy techniques that degrade precision in shared datasets.
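To make the differential privacy idea concrete, the sketch below adds Laplace noise to an aggregate count before it is shared, which is the textbook mechanism for count queries. It is a minimal illustration using only the Python standard library; the function names and the choice of `epsilon` are assumptions, and nothing here is drawn from the settlement or from any production system.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noisy_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with Laplace noise of scale 1/epsilon.

    Adding or removing one person changes a count by at most 1, so the
    sensitivity is 1. Smaller epsilon means more noise and more privacy.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(1.0 / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random()  # seed only for reproducible demos, not in production
    for eps in (0.1, 1.0, 10.0):
        print(f"epsilon={eps}: {noisy_count(1200, eps, rng):.1f}")
```

The noise degrades precision for any single released figure while keeping averages over many users useful, which is the trade-off the paragraph above describes.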

Users benefit from these enforcement actions only when they understand the mechanisms at stake. The news isn’t that Grindr failed to protect data. The news is that the app industry’s standard practices—analytics SDKs, ad networks, data brokers—create inherent risks for sensitive information that legal language alone cannot address. Personal data insurance may become necessary as these risks expand across the digital ecosystem.